M0o+ Nature's Bounty

How M0o+ solves Nature's Bounty

After tackling Hungry Cattle, Nature’s Bounty is next on the hit-list.

Lots of videos/pictures in this post, because I think it helps a lot with explaining how it all works.

The challenge is pretty daunting: The robot must find an apple tree, and pick 12 apples from it, three times in a row, in 5 minutes or less. That’s an average of just over 8 seconds per apple.

Tree

Before picking apples, there must be a tree with apples to pick.

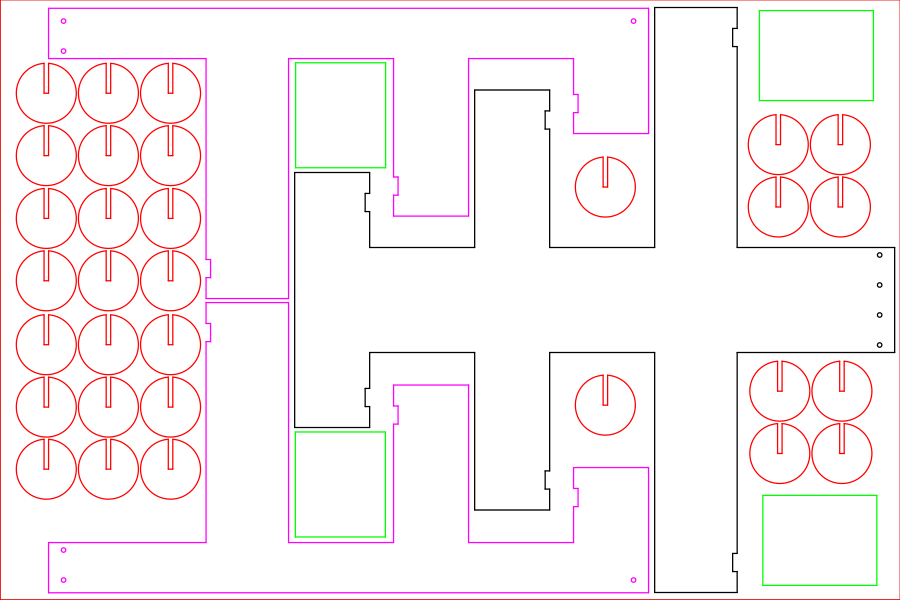

I took the diagram from the Pi Wars website, and designed a tree and apples which would fit into a single 600x400mm piece of 3mm laser ply, which is what we generally have available at Makespace.

There are a couple of 3D printed bits to hold the top and bottom together.

To attach the apples to the tree, there are magnets embedded in the branches, and short pieces of coat hanger wire embedded in the apples. This gives a secure, somewhat flexible, and crucially quick to reset attachment for the apples.

I hacked another piece of coat hanger wire onto the end of the boom and had a go at picking off some apples:

I have plucked my first apples! Remote control, and I don't think the attachment is in the legal size range, but still 😁 #PiWars (⚠️loud noise warning⚠️) pic.twitter.com/NfSVnltBKE

— Brian Starkey (@usedbytes) February 20, 2022

First pickers

Early on I was considering a design which would use two vertical sticks to pick off a set of 3 apples at a time, but that didn’t gel very well with M0o+’s boom design, and also seemed quite challenging to fit within the size limits.

The boom was designed to be able to reach the top apple on the tree, so the question is what to attach to the end of it to pick apples?

After hooking up the levelling servo, the first thing I attached to the end of the boom was a simple cardboard hoop which actually worked OK for detaching apples:

I quickly ran into two problems with this design:

- It’s very sensitive to the positioning relative to the apple.

- It’s very hard to fit it between the bottom of the apple and the branch below.

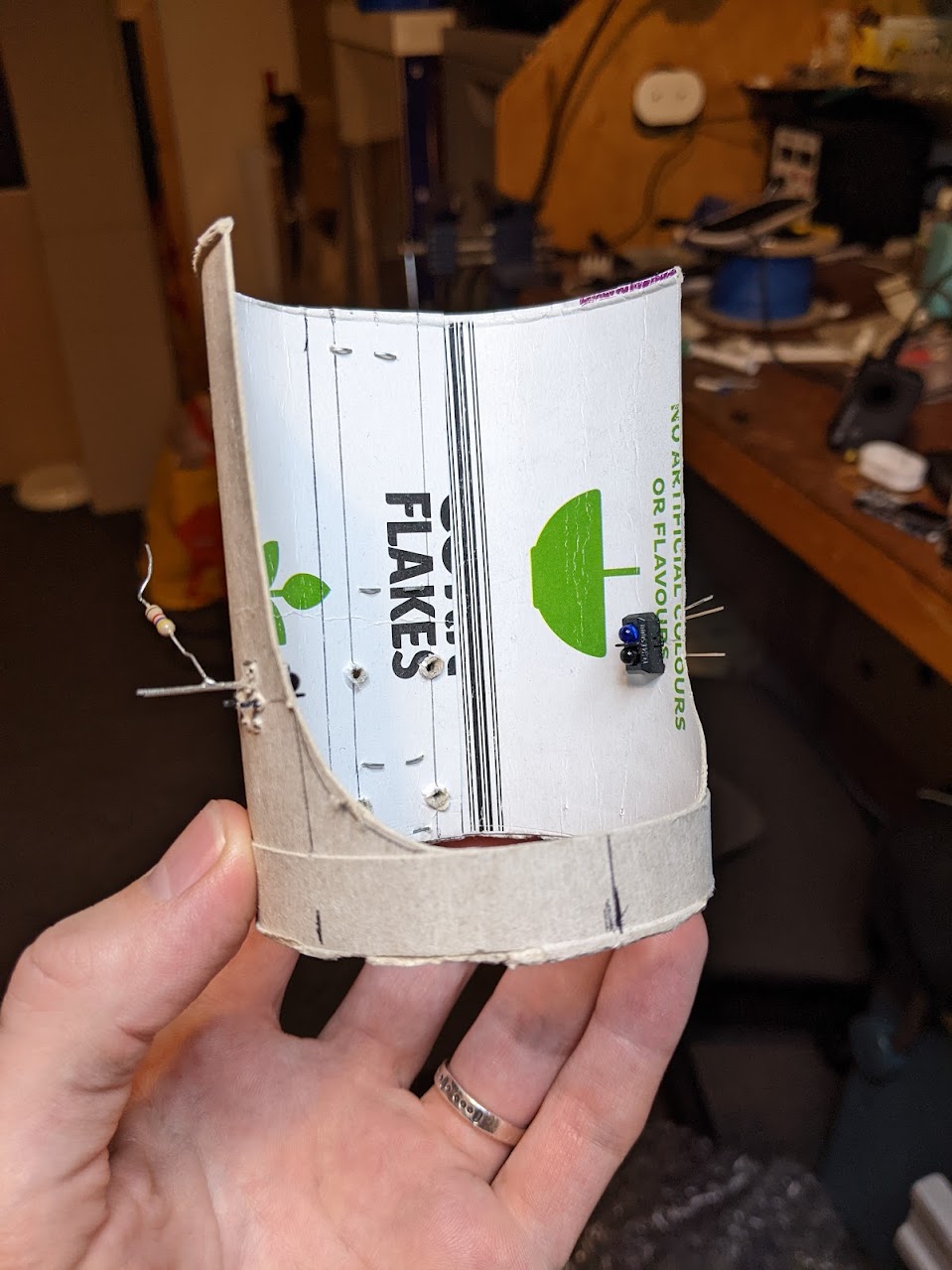

I tried addressing these problems with a refined design, using a thinner hoop to allow more space and adding infrared sensors to help detect when an apple is positioned correctly:

The result was worse in almost every way. The thinner hoop doesn’t reliably detach the apples, and the taller/bulkier sides just give more opportunity to collide with the tree.

I was really hoping to be able to use an entirely passive picker (because I was being lazy and didn’t want to design an articulated one), but alas something more complicated seemed inevitable.

Articulated Picker

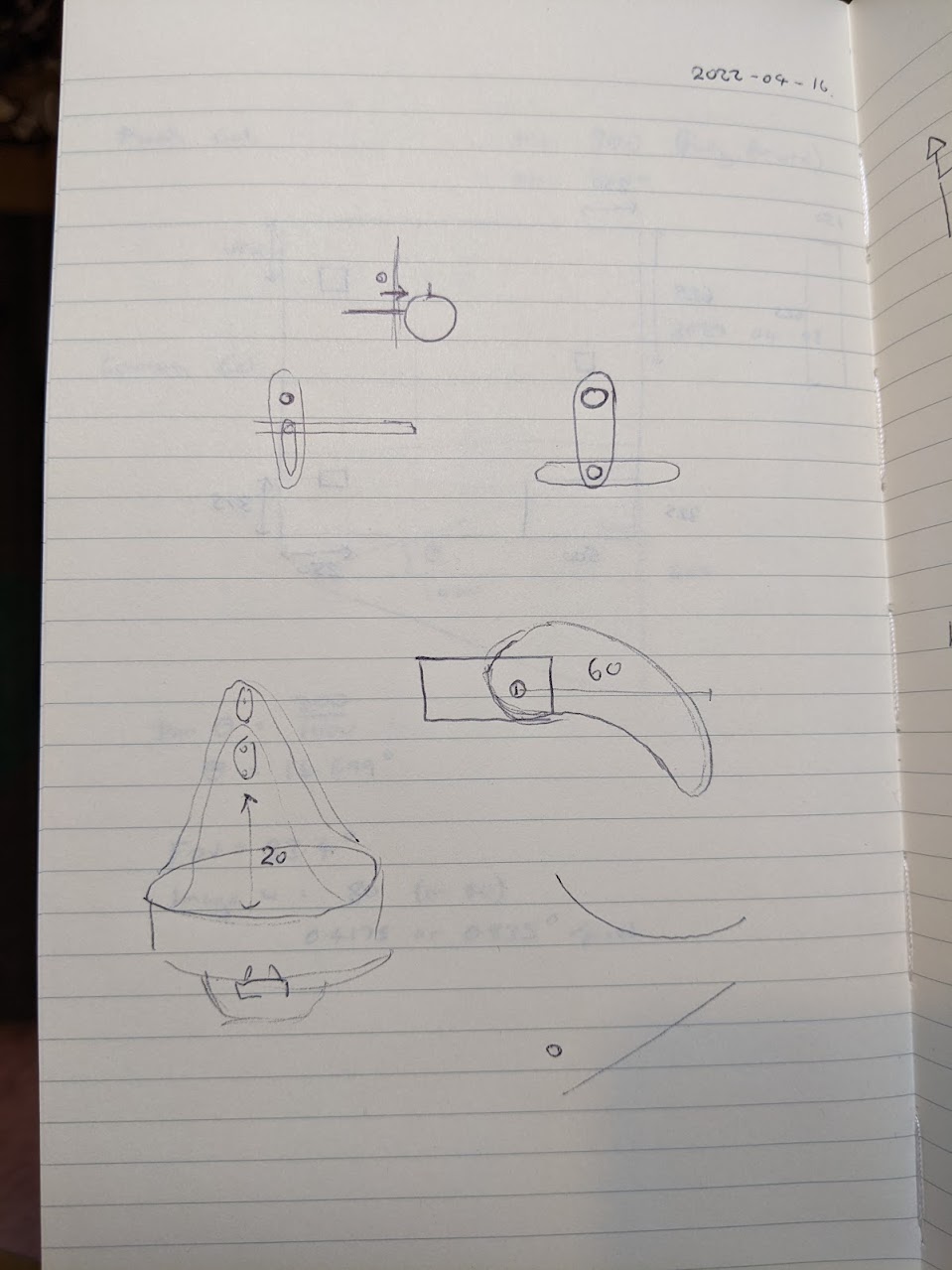

I spent some time head-scratching and sketching, trying to come up with a mechanism which could “shove” an apple to detach it from my tree.

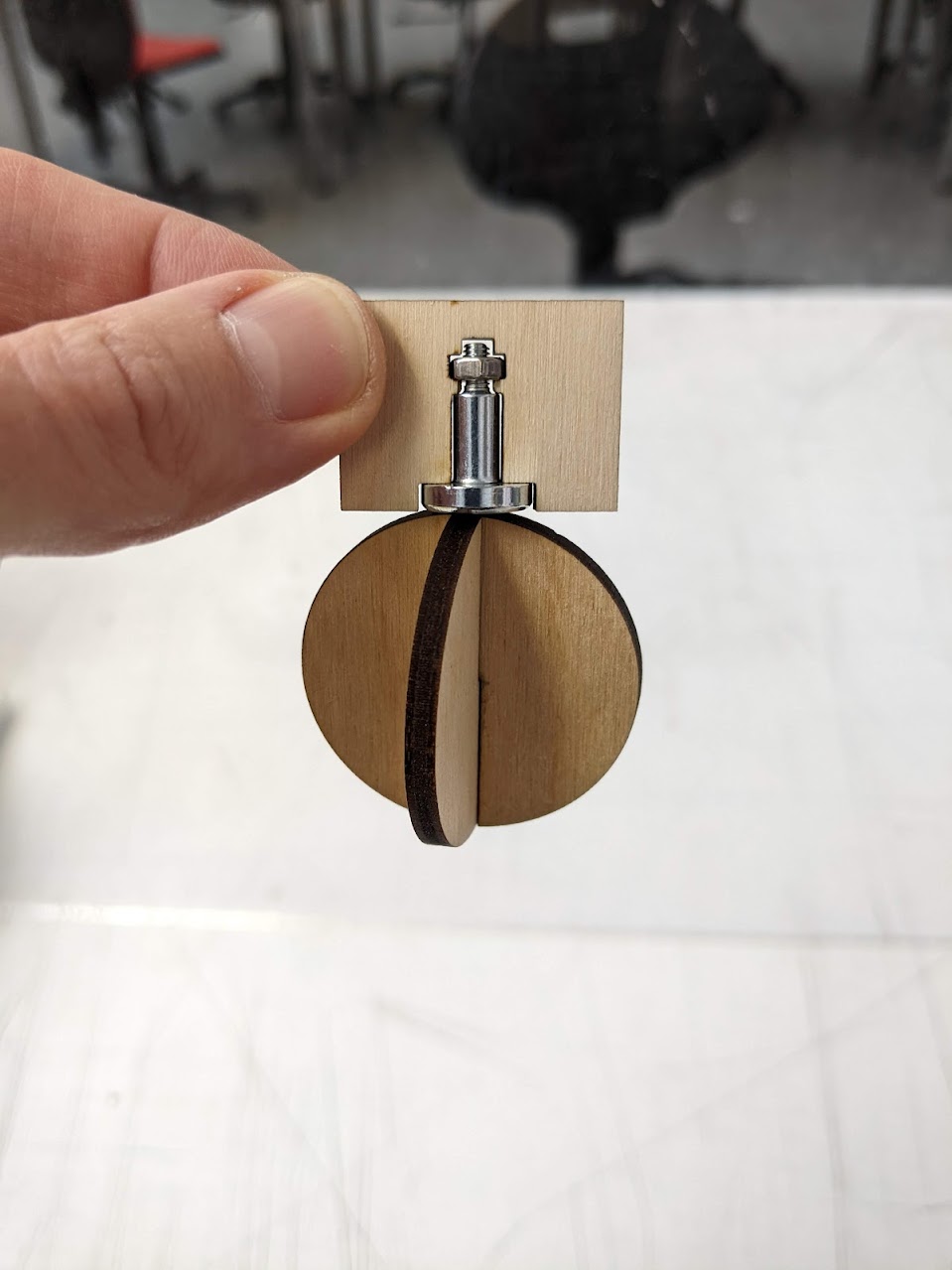

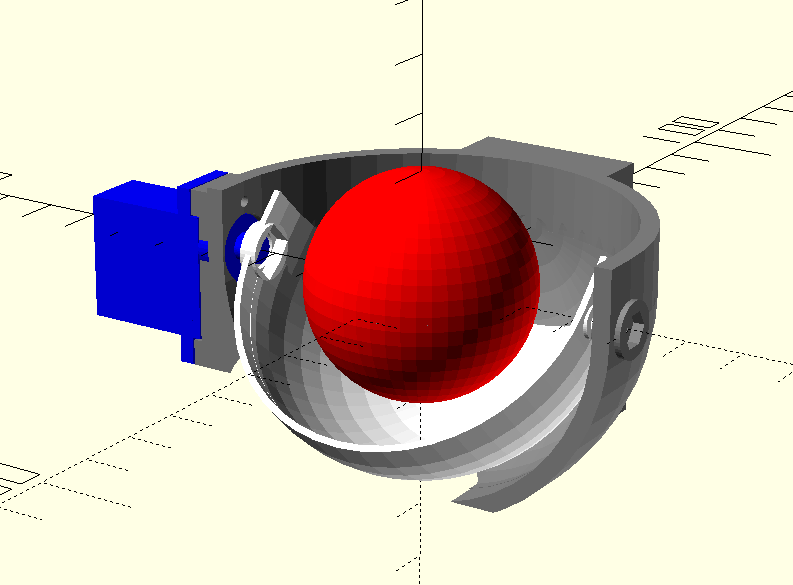

Eventually I settled on a design which uses a single servo to close a hemispherical “shell” around an apple, then pulls backwards to remove it from the tree, “catching” it in the cup that gets formed.

I’m quite proud of this design. When approaching the apple, the inner shell is completely opened, giving the maximum amount of space to manoeuvre. The shell closes to capture the apple, and holds it until the robot is positioned properly to drop it. Then, to drop the apple, the shell moves further, opening a hole at the bottom which the apple can fall through.

It works really well, and makes satisfying noises as the apple is captured and dropped.

I copied over the infrared sensor to detect when an apple is in the right spot for capturing, and coded up autonomous picking of two apples. This works, but it’s clearly too slow to meet the challenge timings:

Feels like decent progress being made on apple (or bauble?) picking, but beating the time limit is going to be impossible with this design 😫 #PiWars pic.twitter.com/i7NCBdK2P0

— Brian Starkey (@usedbytes) April 18, 2022

Bucket and Speed Optimisation

With the basics working, I needed a way to actually collect the apples and also try and speed up the collection.

The first obvious win from a speed perspective was to move the boom as little as possible - it’s much faster to drive the robot back and forth than it is to move the boom back and forth.

To catch the apples, I made a little wooden box which attaches to the front of the robot with magnets (being careful to stay within the size limits!), and this is where my real breakthrough came in terms of speed: The bottom apple is low enough down that it can simply be knocked off the tree by the back side of the bucket as the robot positions for the other two apples!

Here’s a very short video showing how the bottom apple is knocked off and how the bucket catches the ones dropped from the boom:

This saves a huge amount of time compared to moving the boom down to grab the bottom apple, and for the first time this made it look actually plausible to collect all of the apples.

Navigation

The next problem to solve is positioning of the robot relative to the tree.

I’ve got front and rear distance sensors, a heading from the IMU, and a camera, so the broad idea is:

1. Drive parallel to the wall until the distance sensors indicate that the robot should be alongside the tree.

2. Turn to face the centre (where the tree should be).

3. Use the camera to align with the tree while driving towards it.

4. Use the front distance sensor to stop at some distance from the tree.

5. Pick the apples.

In step 1, the distances can be tuned by hand to account for how well the robot does/doesn’t turn on the spot.

For step 3, I can paint the base of the tree red and re-use the “find the red blob” vision code.
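As a rough illustration of the "find the red blob" idea, here is a minimal Python sketch. It is not the robot's real vision code: the frame format (rows of RGB tuples), the colour thresholds, the function names and the steering sign convention are all assumptions made for the example.

```python
# Hypothetical sketch: the names, thresholds and frame format are assumptions,
# not taken from the robot's actual vision code.

def red_blob_offset(frame, min_pixels=3):
    """Return the horizontal position of the red blob in the range [-1, 1].

    `frame` is a list of rows, each row a list of (r, g, b) tuples, and is
    assumed to be at least 2 pixels wide. Returns None when fewer than
    `min_pixels` red pixels are found.
    """
    width = len(frame[0])
    xs = []
    for row in frame:
        for x, (r, g, b) in enumerate(row):
            # Crude "red" test: strong red channel, weak green and blue.
            if r > 150 and g < 100 and b < 100:
                xs.append(x)
    if len(xs) < min_pixels:
        return None
    centre_x = sum(xs) / len(xs)
    half_width = (width - 1) / 2
    # Map the pixel column to [-1, 1], where 0 means dead ahead.
    return (centre_x - half_width) / half_width


def steering_from_offset(offset, gain=0.5):
    """Proportional steering; positive means turn right (assumed convention)."""
    return gain * offset


RED = (200, 20, 20)
GREY = (80, 80, 80)
frame = [[GREY, GREY, GREY, RED, RED] for _ in range(3)]
offset = red_blob_offset(frame)
steer = steering_from_offset(offset)
```

With the blob to the right of centre, the offset is positive and the robot would steer right while it keeps driving forwards.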

The boom inverse kinematics controller makes it very easy to position the boom at the right height for each apple, and the infrared sensor on the picker can let the code know when it’s positioned correctly to close.

This actually went better than expected, and in around a day I had the robot moving around the arena and mostly managing to pick the first couple of branches clean:

Tuning, tuning, tuning

From here, it’s just a case of tuning, tuning, tuning.

I wrote a new “autonomous sequencer”, a sort-of generic autonomous task planner which can do things like:

- Drive a heading until a distance sensor reads a certain distance

- Wait until the boom reaches some target before moving to the next step

- Drive towards a red blob

- Turn to a heading, reliably!
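To give a feel for what a sequencer like this might look like, here is a minimal Python sketch. It is an illustration only: the `Step` class, `run_sequence`, the state dictionary and the toy simulation are all made up for the example and are not the robot's real code. Each step sets some targets when it starts, then the sequencer waits for the step's "done" condition before moving on.

```python
# Hypothetical sketch of a step sequencer; every name here is an assumption.

class Step:
    """One step: `start` sets targets once, `done` says when to move on."""

    def __init__(self, name, start, done):
        self.name = name
        self.start = start
        self.done = done


def run_sequence(steps, state, tick, max_ticks=1000):
    """Run steps in order. Returns (names of completed steps, ticks used)."""
    completed = []
    ticks = 0
    for step in steps:
        step.start(state)
        while not step.done(state):
            if ticks >= max_ticks:
                return completed, ticks  # give up rather than hang forever
            tick(state)
            ticks += 1
        completed.append(step.name)
    return completed, ticks


def simulate(state):
    """Toy physics: drive closes the distance, boom creeps towards its target."""
    state["distance_mm"] -= state["speed"] * 10
    if state["boom_mm"] < state["boom_target_mm"]:
        state["boom_mm"] = min(state["boom_mm"] + 2, state["boom_target_mm"])


def drive_and_raise(state):
    # Start driving and start raising the boom at the same time.
    state["speed"] = 1.0
    state["boom_target_mm"] = 100


def stop(state):
    state["speed"] = 0.0


steps = [
    Step("drive until 200mm", drive_and_raise, lambda s: s["distance_mm"] <= 200),
    Step("wait for boom", stop, lambda s: s["boom_mm"] >= s["boom_target_mm"]),
]
state = {"distance_mm": 500, "speed": 0.0, "boom_mm": 0, "boom_target_mm": 0}
completed, ticks = run_sequence(steps, state, simulate)
```

In this toy run the boom starts moving during the drive, so the "wait for boom" step only has to cover the remainder of the boom's travel instead of all of it.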

Turning to a heading in particular is harder than it might seem. A proportional controller mostly works well, but when the robot is very nearly facing the right way the motor drive isn’t enough to overcome friction. I couldn’t get a PID controller to work by itself without adding overshoot or oscillation. I ended up with a PID loop with a few special cases to get something which works reliably on carpet and smooth surfaces, as well as at different battery levels.
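Here is a minimal Python sketch of the general shape of such a controller. The two special cases shown (a stop tolerance and a minimum drive level to beat friction) are guesses at the kind of thing that helps, not the robot's actual special cases, and all the gains and names are made-up values for illustration.

```python
import math

# Hypothetical sketch: gains, thresholds and special cases are assumptions.


def heading_error(target_deg, current_deg):
    """Shortest signed angle from current to target, in (-180, 180]."""
    error = (target_deg - current_deg) % 360.0
    if error > 180.0:
        error -= 360.0
    return error


class TurnController:
    def __init__(self, kp=0.02, ki=0.0, kd=0.0,
                 min_drive=0.15, max_drive=1.0, tolerance_deg=1.0):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.min_drive = min_drive
        self.max_drive = max_drive
        self.tolerance_deg = tolerance_deg
        self.integral = 0.0
        self.prev_error = None

    def update(self, target_deg, current_deg, dt):
        """Return a turn drive level in [-max_drive, max_drive]."""
        error = heading_error(target_deg, current_deg)

        # Special case 1: close enough, so stop and reset the integrator.
        if abs(error) <= self.tolerance_deg:
            self.integral = 0.0
            self.prev_error = error
            return 0.0

        self.integral += error * dt
        if self.prev_error is None:
            derivative = 0.0
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error

        drive = (self.kp * error + self.ki * self.integral
                 + self.kd * derivative)

        # Special case 2: a tiny drive won't overcome friction, so bump it
        # up to a minimum level in the direction of the error.
        if abs(drive) < self.min_drive:
            drive = math.copysign(self.min_drive, error)

        return max(-self.max_drive, min(self.max_drive, drive))
```

The wrap-around in `heading_error` matters as much as the control loop itself: turning from 350° to 10° should be a 20° turn, not a 340° one.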

The sequencer has been crucial to optimising the timing. I always combine boom positioning with the robot’s movement, so it’s effectively never sat still waiting for the boom to move (unless really necessary).

I also tuned the speeds to get the time down - the robot moves quickly for parts of the course where coarse positioning is fine, and then more slowly for the precise movements like picking an apple.

I also tweaked the bucket, providing a larger back face to more reliably get the bottom apple, and some cardboard sides to help guide the dropped apples in.

With all these optimisations, the robot can complete a full run of 12 apples in around 1m10s, which seemed completely outside the realm of possibility just a few days earlier. This puts 3 runs in 5 minutes firmly within my grasp.
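The timing budget is easy to sanity-check with a few lines of Python. The only inputs are the numbers quoted in this post; treating "around 1m10s" as exactly 70 seconds is an assumption, and the variable names are just for illustration.

```python
CHALLENGE_LIMIT_S = 5 * 60   # 5 minutes for the whole challenge
RUNS = 3                     # the tree must be picked three times
APPLES_PER_RUN = 12
MEASURED_RUN_S = 70          # "around 1m10s", assumed to be exactly 70 s

# Time allowed per apple if the whole 5 minutes were spent picking.
budget_per_apple_s = CHALLENGE_LIMIT_S / (RUNS * APPLES_PER_RUN)

# Time per apple actually achieved in a tuned run.
measured_per_apple_s = MEASURED_RUN_S / APPLES_PER_RUN

# Total time for three runs, and what is left of the 5 minutes.
total_run_s = RUNS * MEASURED_RUN_S
spare_s = CHALLENGE_LIMIT_S - total_run_s

print(f"budget per apple:   {budget_per_apple_s:.2f} s")
print(f"measured per apple: {measured_per_apple_s:.2f} s")
print(f"three runs: {total_run_s} s, leaving {spare_s} s spare")
```

So three runs at this pace take about 3m30s, leaving roughly a minute and a half of the 5 minutes for anything else that the clock counts.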

Read the rules

With working code and a working robot, I set up at Makespace to record my runs, and was extremely pleased to catch a good run on my second attempt.

Unfortunately, when I got home and re-read the rules I noticed:

Your robot can be initially placed anywhere inside the Barn. Trailers can be outside the Barn if necessary. However, your robot must start facing the left-hand wall so that it must turn to “find” the apple tree.

Bother! I started facing right.

Thankfully, that didn’t pose too much of a technical issue because the new autonomous task sequencer makes it pretty easy to just change the starting direction, but it was a really disappointing setback! I thought I had the recording in the bag.

I had to wait a week for the next opportunity to re-film, and I learnt a lesson the hard way: read the rules carefully!